If you want to analyze Japanese texts digitally, the first problem you are likely to run up against is that Japanese does not use spaces between words. A computer needs some way of knowing where one word ends and the next begins. So you first need to “tokenize” the text, that is, split it into individual words. Deciding what counts as a word is not easy. Is a verb ending a word or part of a word? Fortunately for us literary scholars, linguists have worked through these kinds of questions and created the necessary tools, so we don’t have to insert spaces manually into a text. Imagine how much work that would be!
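To make the problem concrete, here is a minimal Python sketch. It first shows that splitting on whitespace finds no word boundaries in a Japanese string, and then runs a deliberately naive "longest match" tokenizer. The six-entry `TOY_DICTIONARY` and the function `longest_match_tokenize` are my own inventions for illustration. Real analyzers rely on far larger dictionaries and statistical models, so this is not how WebChamame or UniDic actually segment text.

```python
text = "いづれの御時にか、女御、更衣あまたさぶらひたまひけるなかに"

# Whitespace splitting finds no boundaries: the whole string is one "word".
naive_tokens = text.split()

# Hypothetical mini-dictionary, invented for this example only.
TOY_DICTIONARY = {"いづれ", "の", "御", "時", "に", "か"}


def longest_match_tokenize(text, dictionary):
    """Greedy toy tokenizer: at each position, take the longest dictionary entry."""
    max_len = max(len(word) for word in dictionary)
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest possible candidate first, then shorter ones.
        for length in range(min(max_len, len(text) - i), 0, -1):
            candidate = text[i:i + length]
            if candidate in dictionary:
                tokens.append(candidate)
                i += length
                break
        else:
            # Nothing matched: fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens


toy_tokens = longest_match_tokenize("いづれの御時にか", TOY_DICTIONARY)
print(len(naive_tokens))
print(toy_tokens)
```

Even this toy version has to decide whether 御時 is one word or two, which is exactly the kind of decision a carefully built dictionary settles for us.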

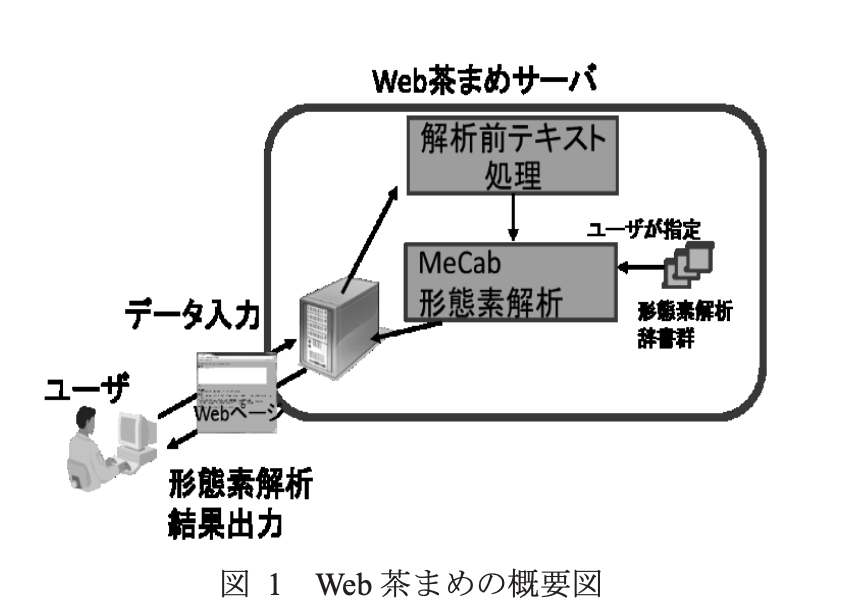

One way to see how computers can tokenize words (without installing anything on your own computer) is to use WebChamame. This site was built by researchers at the National Institute for Japanese Language and Linguistics (NINJAL). To get started, type a Japanese sentence into the left window on the WebChamame site.

I used this first line from the Tale of Genji by Murasaki Shikibu:

いづれの御時にか、女御、更衣あまたさぶらひたまひけるなかに、いとやむごとなき際にはあらぬが、すぐれて時めきたまふありけり。

Source: Japanese Text Initiative (JTI)

Then, where it says “dictionary selection” 辞書選択 (jisho sentaku), choose the type of Japanese your text is written in from the range of options. The dictionaries WebChamame uses are the UniDic digital dictionaries compiled by the National Institute for Japanese Language and Linguistics. They include dictionaries for different time periods, and also for written and spoken language. In this case, since the text comes from the Tale of Genji, we have to choose the dictionary for Japanese of the Heian period, labelled 中古和文 (chūko wabun). For now, leave the rest of the settings as they are and click on “analyze” 解析する (kaiseki suru).

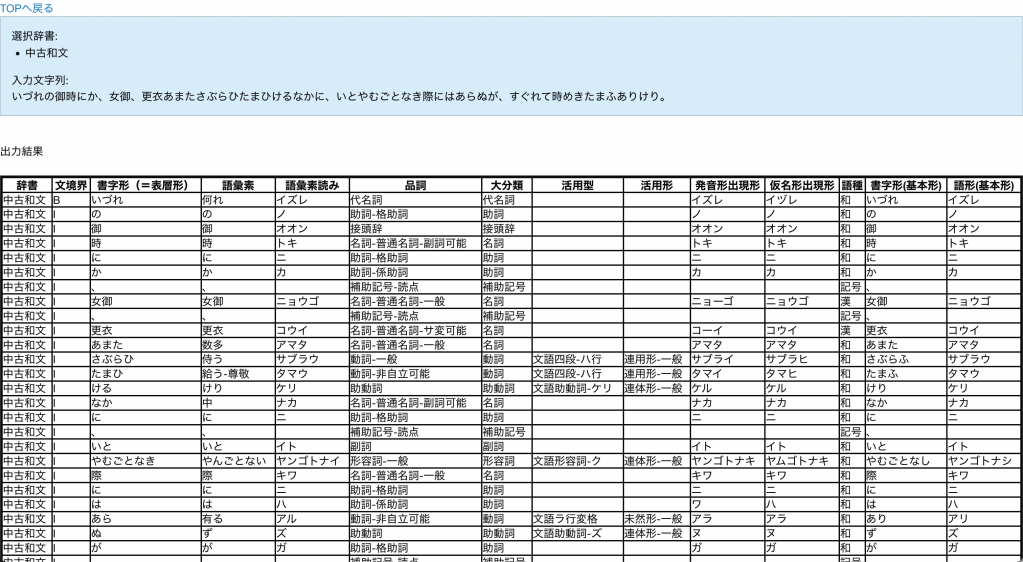

You will land on a page that shows which dictionary you selected and your text, followed by a large table that breaks your text down into words and grammatical elements. The table provides perhaps more information than you need about each item.

Starting from the left, the table tells you which dictionary was used to analyze the item, in this case the 中古和文 dictionary. In the third column, with the title “written form (=surface form)” 書字形 (=表層形) shojikei (=hyōsōkei), you will see the word as it appears in the source text. The next two columns give you the dictionary form, called the “lexeme” 語彙素 goiso, and its “reading” 語彙素読み goiso yomi. These are followed by a column listing the “part of speech” 品詞 hinshi, that is, noun, pronoun, verb, etc. One column entitled “conjugation pattern” 活用型 katsuyōgata even gives you specific information about how each verb conjugates. Another interesting column is the third from the right, entitled “word classification” 語種 goshu, which tells you about the word's origin, for example whether it is of Japanese or Chinese origin.
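If you think of each table row as a small record, the value of the lexeme column becomes easier to see. The Python sketch below is only an illustration under my own assumptions: the class `TokenRow`, its English field names, and the function `surfaces_by_lexeme` are hypothetical, and the three rows were typed in by hand to resemble the kind of values the table shows. They are not copied from actual WebChamame output.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenRow:
    surface: str           # 書字形 (=表層形): the word as written in the text
    lexeme: str            # 語彙素: the dictionary form
    lexeme_reading: str    # 語彙素読み: reading of the dictionary form
    pos: str               # 品詞: part of speech (coarse label here)
    conjugation_type: str  # 活用型: conjugation pattern
    word_class: str        # 語種: word origin


# Hand-typed illustrative rows (assumed values, not real tool output).
ROWS = [
    TokenRow("たまひ", "給う", "タマウ", "動詞", "文語四段-ハ行", "和"),
    TokenRow("ける", "けり", "ケリ", "助動詞", "文語助動詞-ケリ", "和"),
    TokenRow("たまふ", "給う", "タマウ", "動詞", "文語四段-ハ行", "和"),
]


def surfaces_by_lexeme(rows):
    """Collect the written forms that share one dictionary form."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row.lexeme].append(row.surface)
    return dict(grouped)


grouped = surfaces_by_lexeme(ROWS)
print(grouped)
```

Grouping by lexeme rather than by surface form means that differently conjugated forms such as たまひ and たまふ are counted as the same word.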

Try putting in other texts from the Japanese Text Initiative or another source. For your text to be parsed properly, though, you need to choose the right dictionary. If you’re not sure which dictionary is right, you can select a few and compare the results. You can also have WebChamame produce your results in the form of an Excel file, which will automatically download to your computer.

WebChamame does all of the analysis for you, but that does not mean it is the right tool for every task. For those of us interested in natural language processing to compute statistics about Japanese texts, it is rather inconvenient to pore over Excel files.
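As a small taste of what computing statistics might look like, here is a hedged Python sketch that counts parts of speech. It assumes the results have been saved as tab-separated text whose header row uses the column titles 書字形, 語彙素, and 品詞. The real export may be laid out differently, and `SAMPLE_TSV` is a hand-typed stand-in with coarse part-of-speech labels, not actual WebChamame output. The function name `count_parts_of_speech` is likewise my own.

```python
import csv
import io
from collections import Counter

# Hypothetical stand-in for an exported table saved as tab-separated text.
SAMPLE_TSV = (
    "書字形\t語彙素\t品詞\n"
    "いづれ\t何れ\t代名詞\n"
    "の\tの\t助詞\n"
    "御\t御\t接頭辞\n"
    "時\t時\t名詞\n"
    "に\tに\t助詞\n"
    "か\tか\t助詞\n"
)


def count_parts_of_speech(tsv_text, column="品詞"):
    """Count how often each value occurs in the given column."""
    reader = csv.DictReader(io.StringIO(tsv_text), delimiter="\t")
    return Counter(row[column] for row in reader)


pos_counts = count_parts_of_speech(SAMPLE_TSV)
for pos, count in pos_counts.most_common():
    print(pos, count)
```

To work with a real file instead of the sample string, you could pass the function the contents of that file, adjusting the column name to whatever the export actually uses.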

A lot of statisticians use the programming language R to analyze data, and it is also possible to analyze Japanese texts using R. To do that, however, you not only need to be able to use R, you also need to install MeCab and RMeCab on your computer, and that will require another post.

Bibliography

Den, Yasuharu, Toshinobu Ogiso, Hideki Oguro, Atsushi Yamada, Nobuaki Minematsu, Kiyotaka Uchimoto, and Hanae Koiso. 2007. “Kōpasu Nihongogaku no tame no gengo shigen: Keitaisokaisekiyō denshika jisho no kaihatsu to sono ōyō.” Nihongo kagaku 22 (October): 101–23. https://doi.org/10.15084/00002185.

Tsutsumi, Tomoaki, and Toshinobu Ogiso. 2015. “Rekishiteki shiryō wo taishō to shita fukusū no UniDic jisho ni yoru keitaisokaiseki shien tsūru ‘WebChamame.’” In Jinbun kagaku to konpyūta shinpojiumu. http://id.nii.ac.jp/1001/00146542/.